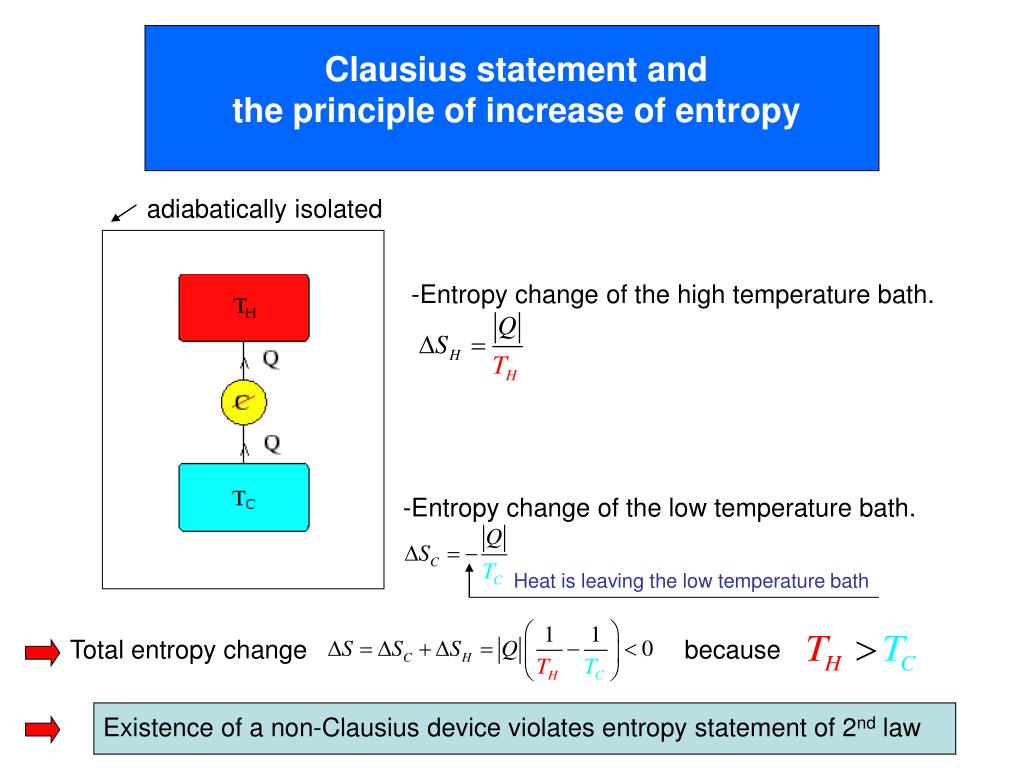

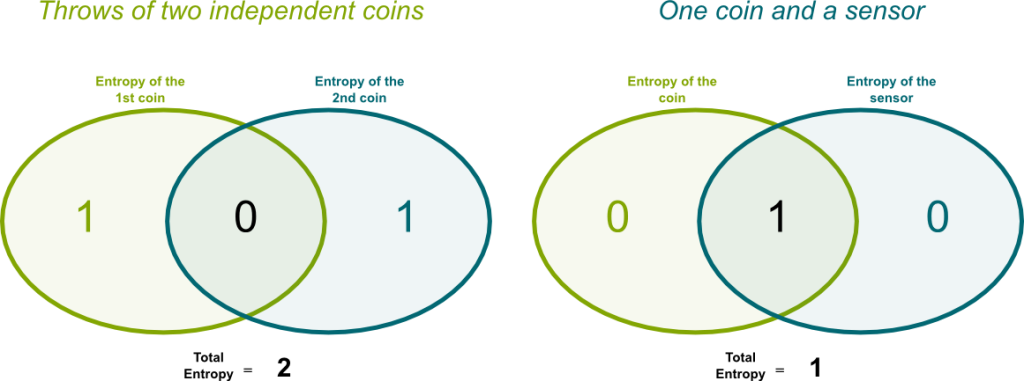

Entropy helps us quantify how uncertain we are of an outcome. For example, if I asked you to predict the outcome of a regular fair coin, you have a \(50\%\) chance of being correct. If instead I used a coin for which both sides were tails, you could predict the outcome correctly \(100\%\) of the time. The higher the entropy, the more unpredictable the outcome is.

The entropy of a discrete random variable \(X\) in base 2 (bits) will be denoted by \(H(X)\). We will interchangeably use \(H(p)\) or \(H(X)\), where \(p\) is the probability distribution of \(X\). The binary entropy function is \(-x\log_2 x - (1-x)\log_2(1-x)\), with the convention that \(0\log_2(0) = 0\). It gives the entropy (i.e. the expected surprisal) of a coin flip, measured in bits, and can be graphed versus the bias of the coin \(\Pr(X=1)\), where \(X=1\) represents a result of heads.

Entropy is built like an expected value. Compare \(E[X] = \sum_x x\,p(x)\) with \(H(X) = -\sum_x p(x)\log_2 p(x)\). The two formulas closely resemble one another; the primary difference between the two is \(x\) vs \(\log_2 p(x)\).

We can redefine entropy as the expected number of bits one needs to communicate any result from a distribution. This type of rationale does not always work (think of a scenario with hundreds of outcomes all dominated by one occurring \(99.999\%\) of the time), but it will serve as a decent guideline for guessing what the entropy should be.

In code, a function with the signature `def entropy(A, axis=None)` computes the Shannon entropy of the elements of `A`. It assumes `A` is an array-like of nonnegative ints whose max value is approximately the number of unique values present.

Information can also be quantified as 'dits' (distinctions), a measure on partitions. 'Dits' can be converted into Shannon's bits to get the formulas for conditional entropy, etc.

For joint distributions consisting of pairs of values from two or more distributions, we have joint entropy, defined as \(H(X,Y) = -\sum_i \sum_j p(x_i, y_j)\log_2 p(x_i, y_j)\). Continuing the analogy, we also have conditional entropy, defined as follows: \(H(Y \mid X) = -\sum_i \sum_j p(x_i, y_j)\log_2 p(y_j \mid x_i)\). The entropy of a pair of random variables is the entropy of one plus the conditional entropy of the other: \(H(X,Y) = H(X) + H(Y \mid X)\).
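
The coin examples and the 99.999% scenario above can be checked numerically. The following is a minimal sketch, not the `entropy(A, axis=None)` function whose docstring is quoted in the text: the names `entropy_bits` and `empirical_entropy_bits` are hypothetical, and the skewed distribution (200 rare outcomes sharing the remaining 0.001%) is an assumed stand-in for "hundreds of outcomes". It uses only the standard library rather than an array library.

```python
import math
from collections import Counter


def entropy_bits(probs):
    """Shannon entropy in bits, using the convention 0 * log2(0) = 0."""
    return sum(-p * math.log2(p) for p in probs if p > 0)


def empirical_entropy_bits(values):
    """Entropy in bits of the observed frequencies of the items in `values`."""
    counts = Counter(values)
    total = sum(counts.values())
    return entropy_bits(c / total for c in counts.values())


fair_coin = entropy_bits([0.5, 0.5])        # 1.0 bit: maximally unpredictable
two_tailed_coin = entropy_bits([0.0, 1.0])  # 0.0 bits: fully predictable

# Assumed example: one outcome at 99.999%, 200 rare outcomes share the rest.
skewed = entropy_bits([0.99999] + [0.00001 / 200] * 200)

# Counting outcomes alone would suggest log2(201) bits; the entropy is far lower.
print(fair_coin, two_tailed_coin, skewed, math.log2(201))
```

If the "rationale" in the text is read as guessing entropy from the number of outcomes, the last line shows where that guess breaks down: the skewed distribution has 201 outcomes but well under one bit of entropy.
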
Just like with probability functions, we can then define other forms of entropy. Conditional entropy and mutual information fit together into the formula \(H(Y) = I(Y;X) + H(Y \mid X)\). This has a nice intuitive meaning: we have decomposed the total 'uncertainty' in \(Y\) into an amount which is shared with \(X\), given by \(I(Y;X)\), and the amount which is independent of \(X\), given by \(H(Y \mid X)\).

In short, entropy is a measure of expected "surprise": essentially, how uncertain we are of the value drawn from some distribution.
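
The decomposition of the uncertainty in Y into a shared part and an independent part can be verified on a small example. This is a sketch under stated assumptions: the joint distribution `joint` is made up for illustration, and the helper names `marginal` and `entropy_bits` are hypothetical. It uses the standard definitions of conditional entropy and mutual information, in bits.

```python
import math

# Hypothetical joint distribution p(x, y) over two binary variables.
joint = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.3}


def marginal(joint, axis):
    """Sum the joint distribution down to one variable (axis 0 = X, 1 = Y)."""
    out = {}
    for pair, p in joint.items():
        out[pair[axis]] = out.get(pair[axis], 0.0) + p
    return out


def entropy_bits(probs):
    """Shannon entropy in bits, using the convention 0 * log2(0) = 0."""
    return sum(-p * math.log2(p) for p in probs if p > 0)


p_x, p_y = marginal(joint, 0), marginal(joint, 1)

h_x = entropy_bits(p_x.values())
h_y = entropy_bits(p_y.values())
h_xy = entropy_bits(joint.values())

# H(Y|X) = -sum p(x, y) * log2 p(y|x), where p(y|x) = p(x, y) / p(x)
h_y_given_x = sum(
    -p * math.log2(p / p_x[x]) for (x, y), p in joint.items() if p > 0
)

# I(Y;X) = sum p(x, y) * log2( p(x, y) / (p(x) * p(y)) )
mutual_info = sum(
    p * math.log2(p / (p_x[x] * p_y[y])) for (x, y), p in joint.items() if p > 0
)

print("H(Y)              =", h_y)
print("I(Y;X) + H(Y|X)   =", mutual_info + h_y_given_x)
print("H(X,Y)            =", h_xy)
print("H(X) + H(Y|X)     =", h_x + h_y_given_x)
```

The first two printed values should agree up to floating-point rounding, as should the last two, which check that the entropy of the pair equals the entropy of one variable plus the conditional entropy of the other.
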
